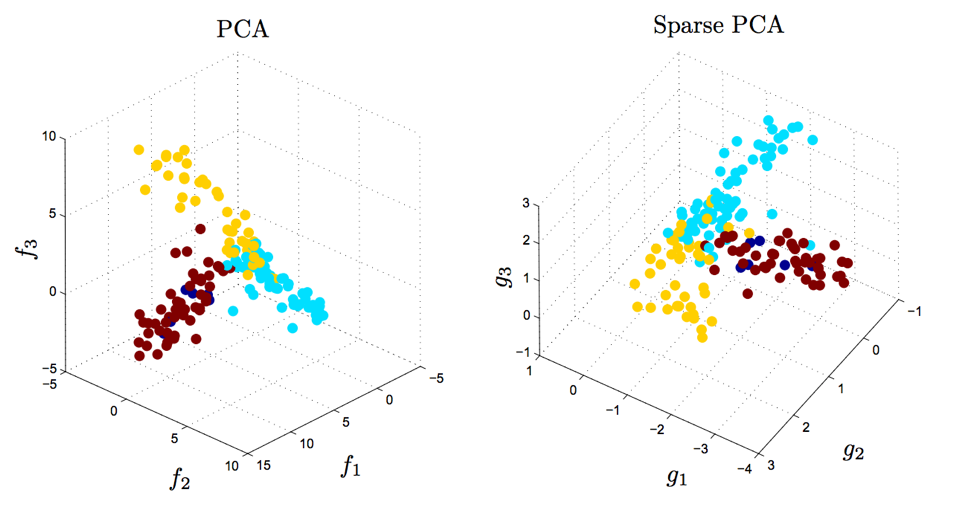

The main purposes of a principal component analysis are to analyze data in order to identify patterns, and to use those patterns to reduce the dimensions of the dataset with minimal loss of information. Here, our desired outcome of the principal component analysis is to project a feature space (our dataset consisting of \(n\) \(d\)-dimensional samples) onto a smaller subspace that represents our data “well”. A possible application would be a pattern classification task, where we want to reduce the computational costs and the error of parameter estimation by reducing the number of dimensions of our feature space, that is, by extracting a subspace that describes our data “best”.

- Principal Component Analysis (PCA) Vs.
- Generating some 3-dimensional sample data
- Why are we choosing a 3-dimensional sample?
- Taking the whole dataset ignoring the class labels
- Computing the covariance matrix (as an alternative to the scatter matrix)
- Computing eigenvectors and corresponding eigenvalues
- Checking the eigenvector-eigenvalue calculation
- Sorting the eigenvectors by decreasing eigenvalues
- Choosing k eigenvectors with the largest eigenvalues
- Transforming the samples onto the new subspace
- Using the `PCA()` class from the `matplotlib.mlab` library
- Differences between the step-by-step approach and `PCA()`
- Using the `PCA()` class from the `sklearn.decomposition` library to confirm our results
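The steps named above can be sketched end to end in a few lines of NumPy. This is only a minimal illustration under stated assumptions, not the article's exact code: the sample sizes, class means, random seed, and the choice of `k = 2` are all made up for the example, and the samples are centered before projection, which is a design choice of this sketch.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed (assumption) for reproducibility

# Generate some 3-dimensional sample data: two hypothetical classes,
# 20 samples each (sizes and means are illustrative assumptions).
class1 = rng.multivariate_normal(mean=[0, 0, 0], cov=np.eye(3), size=20)
class2 = rng.multivariate_normal(mean=[1, 1, 1], cov=np.eye(3), size=20)

# Take the whole dataset, ignoring the class labels: shape (n, d) = (40, 3).
X = np.vstack([class1, class2])

# Covariance matrix of the features (rowvar=False: columns are variables).
cov = np.cov(X, rowvar=False)

# Eigen-decomposition; eigh is suited to symmetric matrices and returns
# the eigenvalues in ascending order, with eigenvectors as columns.
eig_vals, eig_vecs = np.linalg.eigh(cov)

# Check the eigenvector-eigenvalue calculation: cov @ v == lambda * v.
for lam, v in zip(eig_vals, eig_vecs.T):
    assert np.allclose(cov @ v, lam * v)

# Sort the eigenvectors by decreasing eigenvalue.
order = np.argsort(eig_vals)[::-1]
eig_vals = eig_vals[order]
eig_vecs = eig_vecs[:, order]

# Choose the k eigenvectors with the largest eigenvalues: W has shape (d, k).
k = 2
W = eig_vecs[:, :k]

# Transform the (centered) samples onto the new k-dimensional subspace.
X_proj = (X - X.mean(axis=0)) @ W
```

Because the data are centered and `np.cov` uses the unbiased estimator, the sample variance of each projected column equals the corresponding eigenvalue, which gives a quick sanity check on the projection.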
